Predict Classes for New Samples

Zuguang Gu (z.gu@dkfz.de)

2024-02-27

Source:vignettes/predict.Rmd

predict.Rmdpredict_classes() function predicts sample classes based on cola classification. It is mainly used in the following two scenarios:

- Predict sample classes in a new study.

- For a dataset with huge number of samples, users can apply cola on a randomly sampled subset of samples and predict classes for the remaining samples.

To use a cola classification, users need to select the result from a specific top-value method and partitioning method, i.e., the input should be a ConsensusPartition object. In the following example, we use the analysis from Golub dataset and we take the result from the method ATC:skmeans.

data(golub_cola)

res = golub_cola["ATC:skmeans"]

res## A 'ConsensusPartition' object with k = 2, 3, 4, 5, 6.

## On a matrix with 4116 rows and 72 columns.

## Top rows (412) are extracted by 'ATC' method.

## Subgroups are detected by 'skmeans' method.

## Performed in total 250 partitions by row resampling.

## Best k for subgroups seems to be 3.

##

## Following methods can be applied to this 'ConsensusPartition' object:

## [1] "cola_report" "collect_classes" "collect_plots"

## [4] "collect_stats" "colnames" "compare_partitions"

## [7] "compare_signatures" "consensus_heatmap" "dimension_reduction"

## [10] "functional_enrichment" "get_anno" "get_anno_col"

## [13] "get_classes" "get_consensus" "get_matrix"

## [16] "get_membership" "get_param" "get_signatures"

## [19] "get_stats" "is_best_k" "is_stable_k"

## [22] "membership_heatmap" "ncol" "nrow"

## [25] "plot_ecdf" "predict_classes" "rownames"

## [28] "select_partition_number" "show" "suggest_best_k"

## [31] "test_to_known_factors" "top_rows_heatmap"predict_classes() needs at least three arguments: a ConsensusPartition object, the number of subgroups and the new matrix. The first two are for extracting the classification as well as the signatures that best separate subgroups. The new matrix should have the same number of rows as the matrix used for cola analysis, also the row orders should be the same. Be careful that the scaling of the new matrix should also be the same as the one applied in cola analysis.

The prediction is based on the signature centroid matrix. The processes are as follows:

- For the provided

ConsensusPartitionobject and a selected k, the signatures that discriminate classes are extracted byget_signatures(). If number of signatures is more than 500, only 500 most significant signatures are used. - The signature centroid matrix is a k-column matrix where each column is the centroid of samples in the corresponding class, i.e., the mean across samples. If rows were scaled in cola analysis, the signature centroid matrix is the mean of scaled values and vise versa. Please note the samples with silhouette score less than

silhouette_cutoff(0.5 as the default) are removed for calculating the centroids. - With the signature centroid matrix and the new matrix, it perform the prediction.

The class prediction is applied as follows. For each sample in the new matrix, the task is basically to test which signature centroid the current sample is the closest to. There are three methods: the Euclidean distance, cosine distance (it is 1-cos(x, y)) and the correlation (Spearman) distance.

For the Euclidean distance and cosine distance method, for the vector denoted as \(x\) which corresponds to sample \(i\) in the new matrix, to test which class should be assigned to sample \(i\), the distance between sample \(i\) and all \(k\) signature centroids are calculated and denoted as \(d_1\), \(d_2\), …, \(d_k\). The class with the smallest distance is assigned to sample \(i\).

To test whether the class assignment is statistically significant, or to test whether the class that is assigned is significantly closest to sample \(i\), we design a statistic named “difference ratio”, denoted as \(r\) and calculated as follows. First, The distances for \(k\) centroids are sorted increasingly, and we calculate \(r\) as:

\[r = \frac{|d_{(1)} - d_{(2)}|}{\bar{d}}\]

which is the difference between the smallest distance and the second smallest distance, normalized by the mean distance. To test the statistical significance of \(r\), we randomly permute rows of the signature centroid matrix and calculate \(r_{rand}\). The random permutation is performed 1000 times and the p-value is calculated as the proportion of \(r_{rand}\) being larger than \(r\).

For the correlation method, the distance is calculated as the Spearman correlation between sample \(i\) and signature centroid \(k\). The label for the class with the maximal correlation value is assigned to sample \(i\). The p-value is simply calculated by stats::cor.test() between sample \(i\) and centroid \(k\).

If a sample is tested with a p-value higher than 0.05, the corresponding class label is set to NA.

To demonstrate the use of predict_classes(), we use the same matrix as for cola analysis.

mat = get_matrix(res)Note the matrix was row-scaled in cola analysis, thus, mat should also be scaled with the same method (z-score scaling).

And we predict the class of mat2 with the 3-group classification from res.

cl = predict_classes(res, k = 3, mat2)## The matrix has been scaled in cola analysis, thus the new matrix should also be scaled

## with the same method ('z-score'). Please double check.

## Set `help = FALSE` to suppress this message.

##

## * take top 500/2058 most significant signatures for prediction.

cl## class p

## sample_39 3 0

## sample_40 3 0

## sample_42 1 0

## sample_47 1 0

## sample_48 1 0

## sample_49 3 0

## sample_41 1 0

## sample_43 1 0

## sample_44 1 0

## sample_45 1 0

## sample_46 1 0

## sample_70 1 0

## sample_71 1 0

## sample_72 1 0

## sample_68 1 0

## sample_69 1 0

## sample_67 1 0

## sample_55 3 0

## sample_56 3 0

## sample_59 1 0

## sample_52 2 0

## sample_53 2 0

## sample_51 2 0

## sample_50 2 0

## sample_54 1 0

## sample_57 2 0

## sample_58 2 0

## sample_60 1 0

## sample_61 2 0

## sample_65 2 0

## sample_66 1 0

## sample_63 2 0

## sample_64 2 0

## sample_62 2 0

## sample_1 3 0

## sample_2 1 0

## sample_3 3 0

## sample_4 3 0

## sample_5 1 0

## sample_6 3 0

## sample_7 3 0

## sample_8 3 0

## sample_9 1 0

## sample_10 1 0

## sample_11 1 0

## sample_12 2 0

## sample_13 1 0

## sample_14 1 0

## sample_15 1 0

## sample_16 1 0

## sample_17 1 0

## sample_18 3 0

## sample_19 1 0

## sample_20 1 0

## sample_21 1 0

## sample_22 2 0

## sample_23 3 0

## sample_24 1 0

## sample_25 2 0

## sample_26 1 0

## sample_27 3 0

## sample_34 2 0

## sample_35 2 0

## sample_36 2 0

## sample_37 2 0

## sample_38 2 0

## sample_28 2 0

## sample_29 1 0

## sample_30 2 0

## sample_31 2 0

## sample_32 2 0

## sample_33 2 0We compare to the original classification:

data.frame(cola_class = get_classes(res, k = 3)[, "class"],

predicted = cl[, "class"])## cola_class predicted

## 1 3 3

## 2 3 3

## 3 1 1

## 4 1 1

## 5 1 1

## 6 3 3

## 7 1 1

## 8 1 1

## 9 1 1

## 10 1 1

## 11 1 1

## 12 1 1

## 13 1 1

## 14 1 1

## 15 1 1

## 16 1 1

## 17 1 1

## 18 3 3

## 19 3 3

## 20 1 1

## 21 2 2

## 22 2 2

## 23 2 2

## 24 2 2

## 25 1 1

## 26 2 2

## 27 2 2

## 28 1 1

## 29 2 2

## 30 2 2

## 31 1 1

## 32 2 2

## 33 2 2

## 34 2 2

## 35 3 3

## 36 1 1

## 37 3 3

## 38 3 3

## 39 1 1

## 40 3 3

## 41 3 3

## 42 3 3

## 43 1 1

## 44 1 1

## 45 1 1

## 46 2 2

## 47 1 1

## 48 1 1

## 49 1 1

## 50 1 1

## 51 1 1

## 52 3 3

## 53 1 1

## 54 1 1

## 55 1 1

## 56 2 2

## 57 3 3

## 58 1 1

## 59 2 2

## 60 1 1

## 61 3 3

## 62 2 2

## 63 2 2

## 64 2 2

## 65 2 2

## 66 2 2

## 67 2 2

## 68 1 1

## 69 2 2

## 70 2 2

## 71 2 2

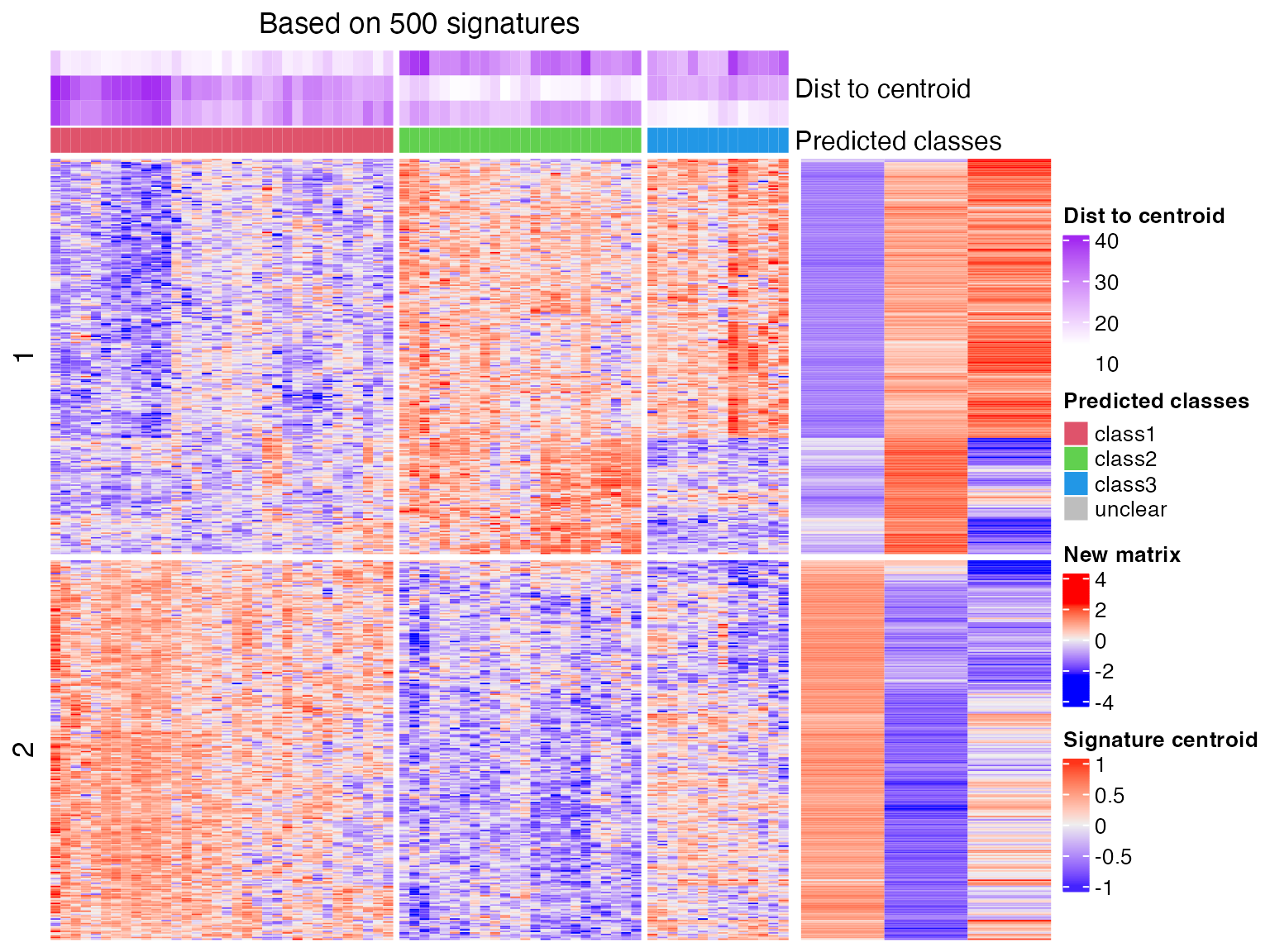

## 72 2 2predict_classes() generates a plot which shows the process of the prediction. The left heatmap corresponds to the new matrix and the right small heatmap corresponds to the signature centroid matrix. The purple annotation on top of the first heatmap illustrates the distance of each sample to the k signatures.

And if we change to correlation method:

cl = predict_classes(res, k = 3, mat2, dist_method = "correlation")## The matrix has been scaled in cola analysis, thus the new matrix should also be scaled

## with the same method ('z-score'). Please double check.

## Set `help = FALSE` to suppress this message.

##

## * take top 500/2058 most significant signatures for prediction.

cl## class p

## sample_39 3 1.665687e-61

## sample_40 3 8.876972e-49

## sample_42 1 5.335467e-29

## sample_47 1 3.131227e-55

## sample_48 1 1.618997e-130

## sample_49 3 1.665001e-113

## sample_41 1 1.300113e-150

## sample_43 1 6.031124e-31

## sample_44 1 3.653788e-75

## sample_45 1 4.659084e-50

## sample_46 1 3.901631e-50

## sample_70 1 2.903322e-13

## sample_71 1 2.205389e-07

## sample_72 1 5.211682e-21

## sample_68 1 8.125708e-150

## sample_69 1 5.089091e-138

## sample_67 1 4.029656e-19

## sample_55 3 1.434628e-27

## sample_56 3 8.579451e-71

## sample_59 1 2.893656e-09

## sample_52 2 9.041974e-32

## sample_53 2 6.479644e-83

## sample_51 2 4.055137e-106

## sample_50 2 9.770796e-114

## sample_54 1 8.832310e-10

## sample_57 2 2.098963e-39

## sample_58 2 1.176659e-81

## sample_60 1 2.796608e-02

## sample_61 2 5.320162e-50

## sample_65 2 2.751101e-62

## sample_66 1 2.390394e-66

## sample_63 2 2.940438e-63

## sample_64 2 5.449233e-73

## sample_62 2 3.518201e-40

## sample_1 3 6.854166e-47

## sample_2 1 3.386703e-26

## sample_3 3 1.843526e-47

## sample_4 3 1.242419e-42

## sample_5 1 6.397591e-143

## sample_6 3 1.096311e-46

## sample_7 3 4.946118e-88

## sample_8 3 2.912119e-78

## sample_9 1 5.648312e-37

## sample_10 1 8.294430e-06

## sample_11 1 1.101739e-34

## sample_12 2 1.754958e-27

## sample_13 1 1.848347e-147

## sample_14 1 7.580138e-39

## sample_15 1 2.777622e-170

## sample_16 1 4.964595e-37

## sample_17 1 4.009023e-15

## sample_18 3 2.855786e-12

## sample_19 1 4.366128e-46

## sample_20 1 3.014694e-141

## sample_21 1 8.139890e-86

## sample_22 2 3.539365e-21

## sample_23 3 7.710796e-53

## sample_24 1 8.391960e-60

## sample_25 2 1.406378e-05

## sample_26 1 1.539248e-10

## sample_27 3 1.422010e-133

## sample_34 2 2.790574e-95

## sample_35 2 3.014879e-69

## sample_36 2 1.167553e-97

## sample_37 2 5.685684e-86

## sample_38 2 6.403037e-55

## sample_28 2 1.321867e-54

## sample_29 1 4.481475e-16

## sample_30 2 2.099773e-54

## sample_31 2 3.643950e-70

## sample_32 2 6.280885e-63

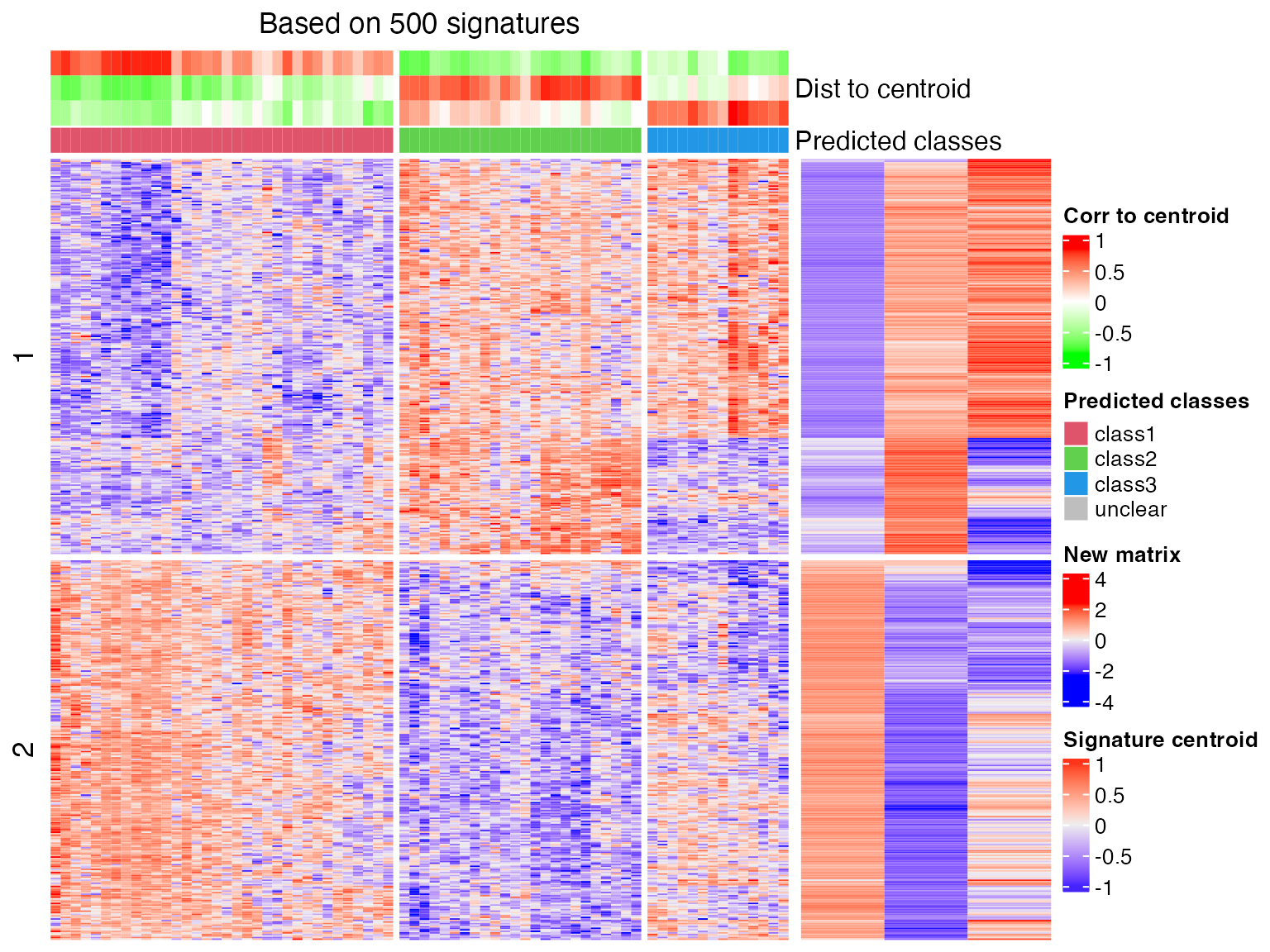

## sample_33 2 8.815045e-134As we can see from the above two heatmaps, correlation method is less strict than the Euclidean method that the two samples that cannot be assigned to any class with Euclidean method are assigned with certain classes under correlation method.

predict_classes() can also be directly applied to a signature centroid matrix. Following is how we manually generate the signature centroid matrix for 3-group classification from Golub dataset:

tb = get_signatures(res, k = 3, plot = FALSE)## * 72/72 samples (in 3 classes) remain after filtering by silhouette (>= 0.5).

## * cache hash: 87c63a8cabc898f97a024514962787f7 (seed 888).

## * calculating row difference between subgroups by Ftest.

## - row difference is extracted from cache.

## * use k-means partition that are already calculated in previous runs.

## * 2058 signatures (50.0%) under fdr < 0.05, group_diff > 0.

# the centroids are already in `tb`, both scaled and unscaled, we just simply extract it

sig_mat = tb[, grepl("scaled_mean", colnames(tb))]

sig_mat = as.matrix(sig_mat)

colnames(sig_mat) = paste0("class", seq_len(ncol(sig_mat)))

head(sig_mat)## class1 class2 class3

## [1,] -0.4997955 0.53497043 0.2966968

## [2,] -0.3093980 0.02013769 0.7168734

## [3,] -0.3509730 0.58915719 -0.1576207

## [4,] -0.1531394 0.49786781 -0.4815776

## [5,] -0.2321462 -0.08925855 0.7167982

## [6,] -0.2998939 0.02113042 0.6920903And sig_mat can be used in predict_classes():

cl = predict_classes(sig_mat, mat2)

cl = predict_classes(sig_mat, mat2, dist_method = "correlation")## R version 4.3.1 (2023-06-16)

## Platform: x86_64-apple-darwin20 (64-bit)

## Running under: macOS Ventura 13.2.1

##

## Matrix products: default

## BLAS: /Library/Frameworks/R.framework/Versions/4.3-x86_64/Resources/lib/libRblas.0.dylib

## LAPACK: /Library/Frameworks/R.framework/Versions/4.3-x86_64/Resources/lib/libRlapack.dylib; LAPACK version 3.11.0

##

## Random number generation:

## RNG: L'Ecuyer-CMRG

## Normal: Inversion

## Sample: Rejection

##

## locale:

## [1] C/UTF-8/C/C/C/C

##

## time zone: Europe/Berlin

## tzcode source: internal

##

## attached base packages:

## [1] stats graphics grDevices utils datasets methods base

##

## other attached packages:

## [1] cola_2.9.1 knitr_1.44 markdown_1.10

##

## loaded via a namespace (and not attached):

## [1] blob_1.2.4 bitops_1.0-7 Biostrings_2.68.1 RCurl_1.98-1.12

## [5] fastmap_1.1.1 XML_3.99-0.14 digest_0.6.33 lifecycle_1.0.4

## [9] cluster_2.1.4 Cairo_1.6-2 survival_3.5-8 KEGGREST_1.40.1

## [13] RSQLite_2.3.1 magrittr_2.0.3 genefilter_1.82.1 compiler_4.3.1

## [17] rlang_1.1.2 sass_0.4.8 rngtools_1.5.2 tools_4.3.1

## [21] yaml_2.3.7 brew_1.0-8 doRNG_1.8.6 bit_4.0.5

## [25] mclust_6.0.0 xml2_1.3.6 eulerr_7.0.0 RColorBrewer_1.1-3

## [29] purrr_1.0.2 BiocGenerics_0.48.1 desc_1.4.2 grid_4.3.1

## [33] stats4_4.3.1 xtable_1.8-4 colorspace_2.1-0 iterators_1.0.14

## [37] cli_3.6.2 rmarkdown_2.25 crayon_1.5.2 ragg_1.2.6

## [41] httr_1.4.7 rjson_0.2.21 DBI_1.1.3 cachem_1.0.8

## [45] stringr_1.5.0 splines_4.3.1 zlibbioc_1.46.0 parallel_4.3.1

## [49] AnnotationDbi_1.62.2 impute_1.74.1 XVector_0.40.0 matrixStats_1.2.0

## [53] vctrs_0.6.4 Matrix_1.6-1.1 jsonlite_1.8.8 slam_0.1-50

## [57] IRanges_2.36.0 GetoptLong_1.0.5 S4Vectors_0.40.2 bit64_4.0.5

## [61] irlba_2.3.5.1 clue_0.3-65 magick_2.8.0 systemfonts_1.0.5

## [65] foreach_1.5.2 jquerylib_0.1.4 annotate_1.78.0 glue_1.6.2

## [69] pkgdown_2.0.7 codetools_0.2-19 stringi_1.7.12 shape_1.4.6

## [73] GenomeInfoDb_1.36.4 ComplexHeatmap_2.18.0 htmltools_0.5.7 GenomeInfoDbData_1.2.10

## [77] circlize_0.4.15 R6_2.5.1 microbenchmark_1.4.10 textshaping_0.3.7

## [81] doParallel_1.0.17 rprojroot_2.0.3 evaluate_0.22 lattice_0.21-9

## [85] Biobase_2.60.0 png_0.1-8 memoise_2.0.1 bslib_0.6.1

## [89] Rcpp_1.0.11 xfun_0.40 fs_1.6.3 MatrixGenerics_1.12.3

## [93] skmeans_0.2-16 GlobalOptions_0.1.2